Policy Prototypes: How designers and policy practitioners can use prototypes to get feedback and iterate on policy

By Angelica Quicksey, Head of Public Spaces Incubator, New_ Public & Chris Meierling, Director of Experience Design, Medable

As designers, we cheer every time we see a government agency embrace the principles and practices of human-centered design. Human-centered design incorporates feedback from the people you’re designing for throughout the design process so you can build unified, clear, and respectful products and services. When making policy decisions for laws, regulations, or program operations, human-centered design practices like prototyping can help you test your assumptions early on to create better outcomes. In our experience, prototyping is an underexplored and underused approach in the public sector. Building robust feedback loops into policy and program development produces stronger policies, but government agencies tend to be risk-averse and rely on ideas that maintain the status quo. Prototyping pushes them to be more expansive and to test ideas that might not work. Yet prototyping actually reduces risk: it reveals opportunities for improvement and success that can’t be surfaced by doing things the way they’ve always been done.

Narrowing the gap

Prototypes are most commonly used while building software. Atlassian—a company that makes collaborative software for agile development teams—defines a prototype as “an early sample, model, or release of a product built to test a concept or process or to act as a thing to be replicated or learned from.” In an agile development process, a prototype is a working piece of code that developers deploy in a matter of days or weeks. This allows developers to get feedback fast.

In the policy world, the feedback loop is much slower. Years may pass between when a policy is first created and when the public and civil servants experience the impacts of that policy. Because policy decisions are made based on the available data—data that can be years old or incomplete—assumptions are unavoidably baked into the development of any policy. The world can change significantly between when an idea is conceived and when it is deployed. The assumptions that get cemented into a program early in the process may no longer be relevant. Prototyping allows us to get feedback more quickly, so assumptions can be tested and policy decisions can be made based on up-to-date, relevant data about what people actually need.

Applying prototyping to policy

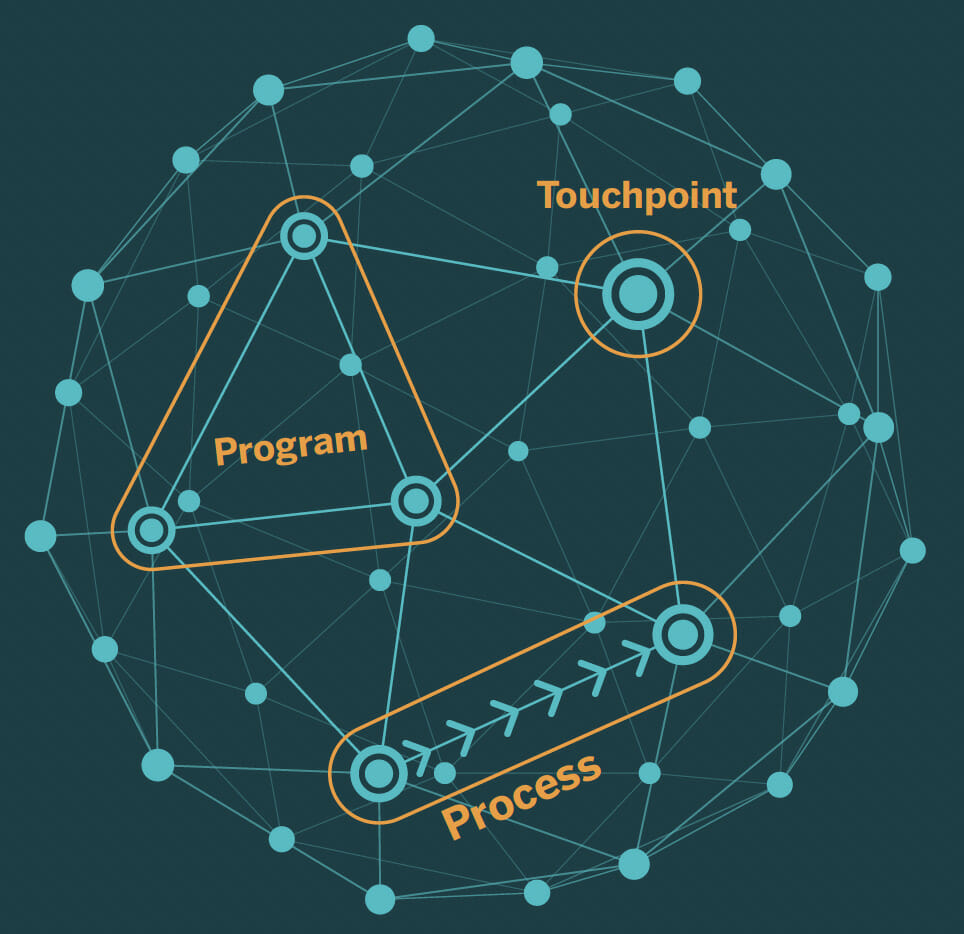

We define a prototype as an object, device, or experience that proves or disproves an assumption. Prototyping should also involve setting up a team to learn from the prototype and iterate on the policy or on how that policy is executed. Policy prototypes are tangible artifacts or experiences that make a policy real for people and allow policy-makers to test their assumptions and iterate based on what they learn. Policies create the frameworks and rules for government programs, but people don’t experience a policy until it is delivered through a program, process, or touchpoint. From Medicaid health insurance (a program), to applying for affordable housing (a process), to a letter from a student loan provider (a touchpoint), policies are just words on a page until they’re delivered to the people they are meant to serve. We’ll discuss three types of prototypes:

1. Program prototypes: A government program is a large set of related activities with a particular long-term aim, supported by a local, state, or federal government. Program prototypes let you test and iterate on core program components. This helps you refine and adapt program models toward their intended outcomes, rather than simply deciding whether to approve or discontinue a program.

2. Process prototypes: A process is a sequence of steps to accomplish a task, like the process to register to vote or apply for a benefit. Processes are often part of larger programs and can be represented as a “customer journey.” Process prototypes test the steps in sequence and consider all the parties involved. They can reduce pain points, drive greater efficiency, or increase access.

3. Touchpoint prototypes: Each interaction with a government service or office can be considered a touchpoint, from a single email to a conversation with the agent at your local Department of Motor Vehicles (DMV). Touchpoint prototypes are designed to test and improve specific artifacts or moments of interaction through which people engage with your policy, program, or process.

Policy-makers can create prototypes of programs, processes, and touchpoints before they’re embedded in our everyday lives.

Testing and improving

Many policies result in programs: large sets of related activities with a particular long-term aim, supported by a local, state, or federal government. Examples range from federal workforce development programs funded under the Workforce Innovation and Opportunity Act (WIOA), which distribute resources to states and counties, to city-based housing access programs like San Francisco’s affordable housing program, DAHLIA. A program prototype allows public servants, government officials, philanthropic funders, and the people directly impacted by programs to iterate on (that is, test and improve) these large, complex initiatives. Iterating helps build better services and inform policy-making upstream. Policymakers are likely familiar with the idea of launching a “pilot program,” a scaled-back version of a larger program that either succeeds or fails (though pilots are often expected to succeed). Prototypes, by contrast, are even smaller experiments: they focus on limited feature sets, have faster feedback loops, and build in opportunities for improvement. The failure of a prototype does not mean the program has failed; it is simply an opportunity to adapt the prototype (and the program) based on the feedback gained, and try again. Given the scale, complexity, and impact of programs, program prototypes are often a collection of many smaller prototypes: a coordinated effort of small, quick tests and iterations that support larger decisions about what a program or policy should look like. Program prototypes minimize risk by identifying potential failures or hazards early on, so decision-makers can make adaptations before spending vast resources.

Getting feedback

From procurement and contracting processes to applying for public benefits, civil servants and the public alike engage with the government’s most important functions through processes. A process is a sequence of steps taken to accomplish a task, like the process to apply for public benefits or the process to write and issue a request for proposal (RFP). Processes are often part of larger programs. Process prototypes can help designers and policy-makers isolate and refine the processes that cut across their work so that those processes deliver on their intended outcomes more effectively and consistently. A process prototype simulates the sequence of procedures and transactions needed to fulfill a policy goal, and it tests and refines all the steps in sequence with the actual people involved. By refining processes with a process prototype, civil servants and designers can improve the experience for everyone who uses the process, create better program outcomes, and increase trust in the institutions that shepherd those processes.

Testing a process prototype can come through simulations, dry-runs, and walk-throughs to play out unexpected scenarios. (Government folks may be familiar with the term “table-top exercise.”) Just as software development teams dedicate days to looking for places where code will break, administrators should dedicate time to identify overlooked process points.

Program prototyping in action in California

When California passed SB 1004 in 2019, legislators laid the groundwork for five counties to experiment with new solutions to divert young adults convicted of non-violent crimes out of the adult justice system and toward more community-based and restorative supports. In response to this opportunity, three partners (the nonprofit Fresh Lifelines for Youth, a county juvenile probation department, and a local philanthropic organization) coordinated research and design work to iterate on a new program model for young adults.

This collaborative design process brought together youth and probation department staff to inform and refine a program. It culminated in a 13-week program prototype made up of experiential learning activities, such as classroom-based learning sessions and pro-social activities, that emphasized knowledge of the law and self-efficacy. The program prototyping process was shorter and less costly than a traditional pilot, and it prioritized learning for the purpose of program refinement and advocacy over statistically significant evaluation metrics.

Within a short time frame, the program prototype resulted in promising indicators like gains in social-emotional learning skills and sustained youth participation. The prototype also showed that classroom-based learning alone didn’t satisfy the range of needs youth have during reentry. This opened up opportunities to incorporate more pro-social activities in the program model, invest in case management capacity to complement classes, and implement greater county and service provider coordination.

These successes and learnings paved the way for additional county and philanthropic funding and the opportunity to scale services to three more Bay Area counties. As new juvenile justice legislation emerged in the next legislative cycle, the initial program prototype and later full-scale pilots provided important lessons for California’s ongoing juvenile justice policy efforts. Stakeholder engagement, advocacy based on early outcomes, and the incorporation of youth voice were all critical to creating a strong feedback loop between people’s experience with the program and policymakers.

Simplifying a process by removing a redundant form

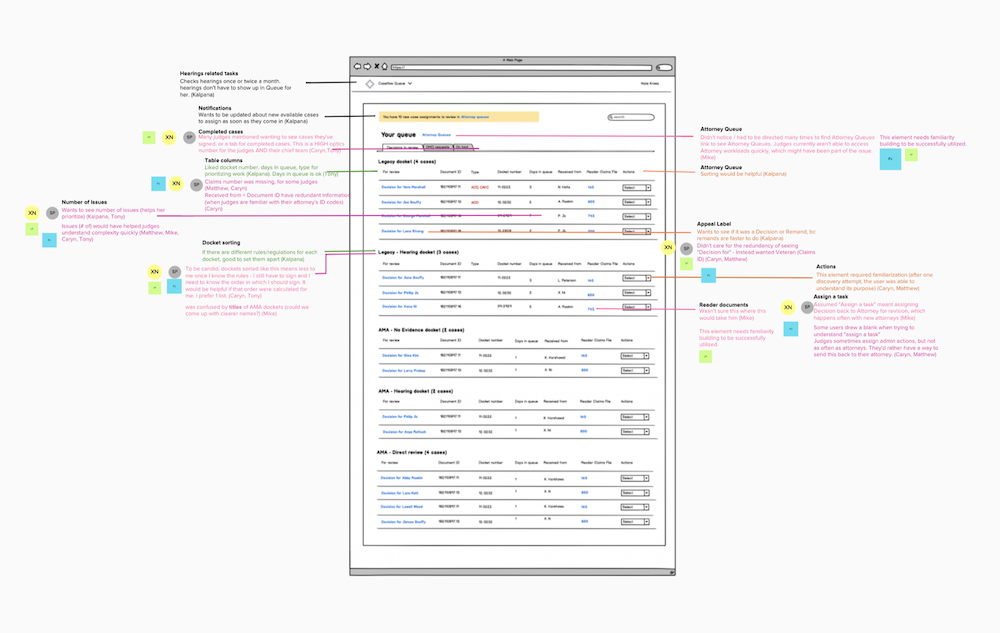

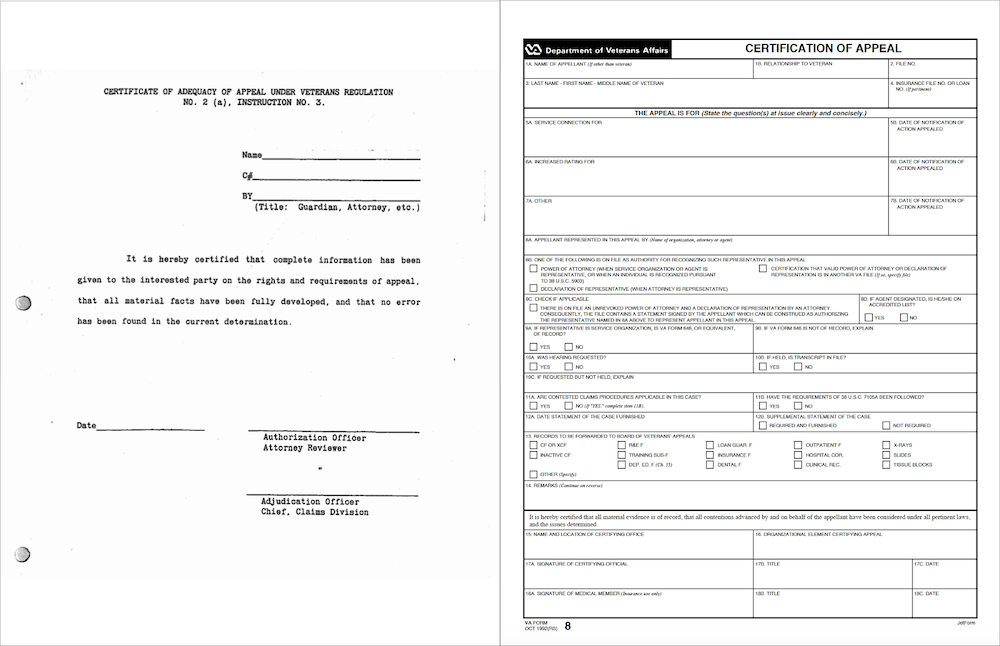

Nava prototyped a new process with the Department of Veterans Affairs (VA) and updated an 85-year-old policy along the way. Nava designers had observed a key pain point in the process Veterans use to appeal decisions made about their benefits. Inaccurate data and significant delays caused by missing paperwork arose when paperwork was transferred from one VA organization to another, and much of this could be traced to a single paper form, Form 8.

Alongside VA partners, Nava first prototyped a digital version of Form 8, which caused the rate of missing documents to drop dramatically, from 40 percent to 2 percent, but the data discrepancies did not change. While this first prototype improved the process, it didn’t fix the problem entirely. So, Nava went back to interview VA staff. By examining the whole data intake and review process, they discovered that the data collected in Form 8 already existed elsewhere, and that VA staff had to reconcile discrepancies in the data between two legacy systems. Nava’s designers developed a second prototype that automatically surfaced discrepancies to VA staff, reducing both the time staff spent filling out forms and the rate of data discrepancies. Ultimately, prototyping proved that Form 8 wasn’t necessary, and it was removed from regulations.

In this instance, the prototypes did exactly what they should do: test assumptions and enable the team to make process changes. They also delivered better end results: a more accurate process that saves VA staff time. Creating multiple prototypes isn’t a sign of failure; it lets you iterate, refining and testing a process multiple times until you achieve the desired goals.

Testing specific artifacts or moments

When people interact with your policy, they do so through a collection of interactions or artifacts. Each interaction or artifact is a touchpoint. A touchpoint prototype can be as simple as the language in a letter or a new application form. It’s an opportunity to make changes at these moments, test the results of those changes, and make an improvement. For example, many cities have adopted Vision Zero as a policy goal, aiming to reduce traffic fatalities and severe injuries to zero. Cities might create new street signs, introduce fines or new driving rules, or promote changes to large vehicles to make them safer. In this example, a sign is a touchpoint. The moment when a speeding driver pays a hefty fine is also a touchpoint. Both present opportunities for prototyping.

Touchpoint prototypes have even smaller scopes than program or process prototypes. Narrow in on one specific artifact or moment that you want to test, and decide what you want to learn from it. Your touchpoint prototype should be narrow enough that you don’t have to iterate on a whole process or program.

But because your touchpoint is likely part of a larger program or process, think about it in that context. What you learn may influence other parts of the program. For example, if your new street sign doesn’t reduce injuries, you may need to try different placements or different signs altogether. So, plan to share what you learn early and widely, and connect with others who might benefit from it.

Best practices

Understanding best practices and emerging opportunities can help you determine when to use a prototype, who to include in the process, and how to make plans that lead to improved programs, processes, and touchpoints. Avoiding a few common pitfalls will help you roll out initiatives with respect for the public and partners and get the right kind of feedback so your efforts are more successful. As you begin prototyping to test your ideas and assumptions, also consider emerging opportunities to go beyond digital.

Applying best practices

- Understand the policy and organizational landscape before moving forward with a prototype. There may be larger or contributing issues that you aren’t aware of yet. Speaking with people throughout the agency you’re working with will reveal pain points to address. In the case of process prototypes, you can conduct a process audit across your agency to identify the business processes that have the greatest impact on the people who use or rely on them. An audit will help you identify which processes are in most need of change.

- Partner and align internally to execute prototypes. Even after you understand the landscape, it is challenging to advance change without allies and champions who are directly involved with implementing programs. These same individuals will create the critical mass for implementing new programs, processes, or touchpoints later on.

- Identify your learning goals early. Prototyping is about learning. If you created a new artifact but didn’t learn anything from it, it wasn’t a prototype. Identify the specific qualitative or quantitative indicators that your prototype is meant to impact. In some cases, your learning goal may simply be to understand whether you’re moving in the right direction, i.e., whether your artifact is the right type of artifact at all. Get a baseline measurement of those indicators so you can compare before and after. You can also co-create prototype indicators with stakeholders to ensure you gather data that matters to policymakers and public administrators and is tied to program outcomes.

- Partner with a policy expert. The policy landscape around any particular issue can change rapidly and affect how policy recommendations are perceived. Working with a policy analyst or researcher can provide valuable ongoing context for framing the recommendations that result from a prototype. If you move toward implementation, a policy expert can help you connect with the champions or critics of a particular type of program or process to unblock development.

- Implement changes with a small group first and expand incrementally. Starting with a smaller group means that you initially impact fewer people and can make changes more quickly. At each stage of the rollout, you can continue to collect feedback and iterate on the process.

Avoiding common pitfalls

- Your prototype should have a potential path to permanence. A prototype is a promise; if you’re making something to be thrown away, you’re breaking that promise. Your users implicitly trust that you are using their time well and that their input matters. So, although you may abandon a prototype, you shouldn’t test an idea with users if it has no potential to become permanent, especially if you are testing an artifact that impacts a vulnerable community. While new barriers can always arise in government, an ethical and effective approach to prototyping considers known barriers in advance.

- Prototypes don’t have to be perfect to get feedback. It’s easy to feel like you need the most polished, high-fidelity version of something in order to get feedback. Though it may seem counterintuitive, this is often not the case. Especially for early feedback, it can be better to test a rough version of an artifact, process, or program design. This is not only because it’s cheaper, but also because people are more willing to give feedback about something that is clearly not finished.

- Consider potential causes of failure in advance. There are many barriers a policy might encounter: a program design could have already been considered, the population served by the prototype isn’t representative, or certain circumstances might not be replicable in other contexts. Policymakers can forecast potential pitfalls beforehand by imagining the program has already failed and then thinking through what might have caused it to fail. This strategy is called running a pre-mortem. It can be a helpful step for programs that hope to influence policy because you can address the pitfalls your team identifies before implementation begins.

- Be aware of shifting policy priorities and budgets. Interest in specific programs or policy priorities can rise and fall alongside the legislative cycle or changes in government administrations. Additionally, funds and attention can shift based on changing philanthropic priorities or interests from newly appointed public officials. Consider the timing of your recommendations and think long term about when they might be most influential, as new, and perhaps more relevant, policy advocacy opportunities open in future cycles. Anticipating events like elections, setting target dates to demonstrate outcomes, and building cross-organizational coalitions are all ways to mitigate dwindling support.

- Focus on outcomes, not outputs. Prototypes might be measured against outputs like number of calls answered or applications reviewed. But these outputs don’t necessarily measure the quality of service provided to a user. The number of customer problems resolved or the rate of error-free applications may be more valuable measures. Understand the difference so you can focus on improving prototype outcomes and, ultimately, program outcomes.

Keep an eye on emerging opportunities

- Prototyping isn’t all about digital. It’s valuable (and increasingly common) to test non-digital programs, processes, or touchpoints. These can include paper communications, like fliers and letters about health insurance enrollment, or in-person interactions, like when a couple applies for a marriage license at City Hall.

- Use demonstration sites to imagine a future where a policy has already been implemented. Demonstration sites are local sites that serve as a “laboratory” to identify and solve problems that may arise during program implementation. San Francisco’s Safe Injection Demonstration Site sought to do just this. Like paper prototypes, the demonstration site was designed to quickly build empathy for the public’s experience within community and policy stakeholder groups. When paired with campaigns and long-term advocacy, demonstration sites can influence the framing and scope of policies.

- Agile delivery methods are being adopted in government. Agile practices, in which teams build and release a product incrementally and incorporate feedback along the way, are often part of digital transformation initiatives. They allow agencies to take an iterative approach to improvement, strategically moving toward larger goals. Prototyping outside traditional administrative departments can create a safe space for failure. City-based innovation teams (e.g., San Francisco’s Office of Civic Innovation or Boston’s Office of New Urban Mechanics) can be more open to experimentation, allowing governments to incubate new ideas before handing them off to more traditional agencies. Philanthropies and new funding streams (e.g., the CARES Act) can also offer opportunities for experimentation, incubation, and scaling with long-term sponsorship and dedicated financial resources.

At their core, policy prototypes get user feedback more quickly and, in the long term, can help ensure that policy design choices meet their intended outcomes. They help practitioners test their assumptions and see how business processes and agency-public interactions come together before committing large resources to policy implementation. The learning that results from every iteration is a powerful input to future efforts and helps ensure that policy goals are fulfilled.

Reducing paperwork barriers with a touchpoint prototype

For Vermonters applying for public benefits, providing eligibility verification documents used to be a time-consuming process that required sending documents by mail or bringing them in person to state facilities. When a Nava team began working with the State of Vermont on integrating its enrollment and eligibility processes, the team’s early research showed that document verification was a common and time-consuming touchpoint across all 37 health care and financial benefit programs that the Vermont Agency of Human Services (AHS) administers. Recognizing this as a high-value touchpoint to improve for Vermonters and the State, Nava quickly developed a prototype uploader tool that was tested with 50 Vermonters per month. With Vermonter feedback, the team was then able to introduce new features iteratively and to create more robust processes and training that supported staff in integrating the uploader into their programs’ operations for all Vermonters. Rather than attempting a large-scale benefit application redesign, improving one touchpoint that cut across multiple programs provided a unique opportunity to improve how Vermonters interact with State services. The uploader became one part of a modular platform that helped the State continue modernizing and integrating health care and financial benefits. Introducing the document uploader drove a 44 percent decrease in the days to eligibility determination for Economic Services clients who needed to provide verification documents.