Education and Extended Reality

By Mark Sivak

On March 11, 2020, I was meeting with senior students who were a month and a half away from finishing their degree projects at Northeastern University. It would be my last fully in-person class for over a year.

My usual concern at this time of the semester is senioritis, an affliction that causes seniors to lose momentum and experience burnout. It is particularly acute at NU because so many of our students continue to work part-time or have already accepted positions that begin after graduation. This day, however, was obviously different: the university president had announced that the entire Boston campus would pivot to remote classes starting the next morning.

This news cast a haze over these meetings, which are usually meant for critique, advising, refinement, and feedback, and turned them into discussions of moving off campus and questions about how we would meet the following week. Spring break had been the week before, and at least one student who had gone to Italy decided to call in instead of coming in person.

The remaining month of the semester was a white-knuckle ride of reconfiguring my courses for remote learning in real time. Classes based on discussion, presentations, and critique were relatively easy to adjust, but my prototyping course, which had just started a module on electronics and would soon move on to extended reality, was vastly more difficult. Shipping equipment, angling laptops so their cameras could see soldering irons, recording lectures, taking screenshots: I tried anything that could help, like an academic chef flinging spaghetti at the wall and hoping it would stick. The semester ended with students having the option to take courses pass/fail, and with a promise from senior leadership that any bad course evaluations would be overlooked. I hope it was the strangest semester I will ever experience.

A few months later, in June 2020, Northeastern University introduced a plan to get students back in the classroom safely. A testing regime and associated policies were meant to ensure that students entering classrooms were safe. Inside, classrooms would have density requirements, cutting the capacity of most rooms by more than half, and instruction would use NUFlex, a hybrid learning system. Rooms were equipped with cameras, microphones, and speakers so that students learning remotely could interact with the students and instructor in the room. New staff were hired, and faculty recreated whole courses for the new delivery method.

Over the fall of 2020 and spring of 2021, students and faculty adjusted to NUFlex. Survey data was collected, anecdotes were discussed, future plans started to take shape, and here we are now facing a return to “normal.”

Here is what we learned:

- Hybrid learning is nearly impossible for faculty to deliver alone. Handling a video call, students in the classroom, online chat, and recording lectures while attempting to teach can simply be too much all at once.

- Students want flexibility in offerings, whether that means a mix of asynchronous and synchronous sections or the ability to choose when it is best for them to come to campus.

- For some activities and topics, the current configuration of NUFlex fell short for students. Many courses, particularly in the Art + Design department, are not built on a model of lectures and exams, and their students would have a better pedagogical experience if the NUFlex technology were adapted to them.

That last point has been my focus and will remain so for the next year. My faculty colleague Jamal Thorne and I have developed course pilots to bring better technology to hybrid learning. Specifically, we are using Extended Reality (XR), an umbrella term for a family of immersive media technologies that includes virtual, augmented, and mixed reality. Virtual Reality (VR) immerses a person in either a digitally created world or a 360° view of a real-world location; it is most commonly experienced through a headset. Augmented Reality (AR) immerses a person in a real-time video of their surroundings overlaid with digital elements. Mixed Reality (MR) immerses a person in a view of the real world with projected digital elements.

Jamal and I have identified courses where XR technologies could make the biggest impact, and within them we created pilots tied to course content and outcomes. Some of our pilot ideas are as follows:

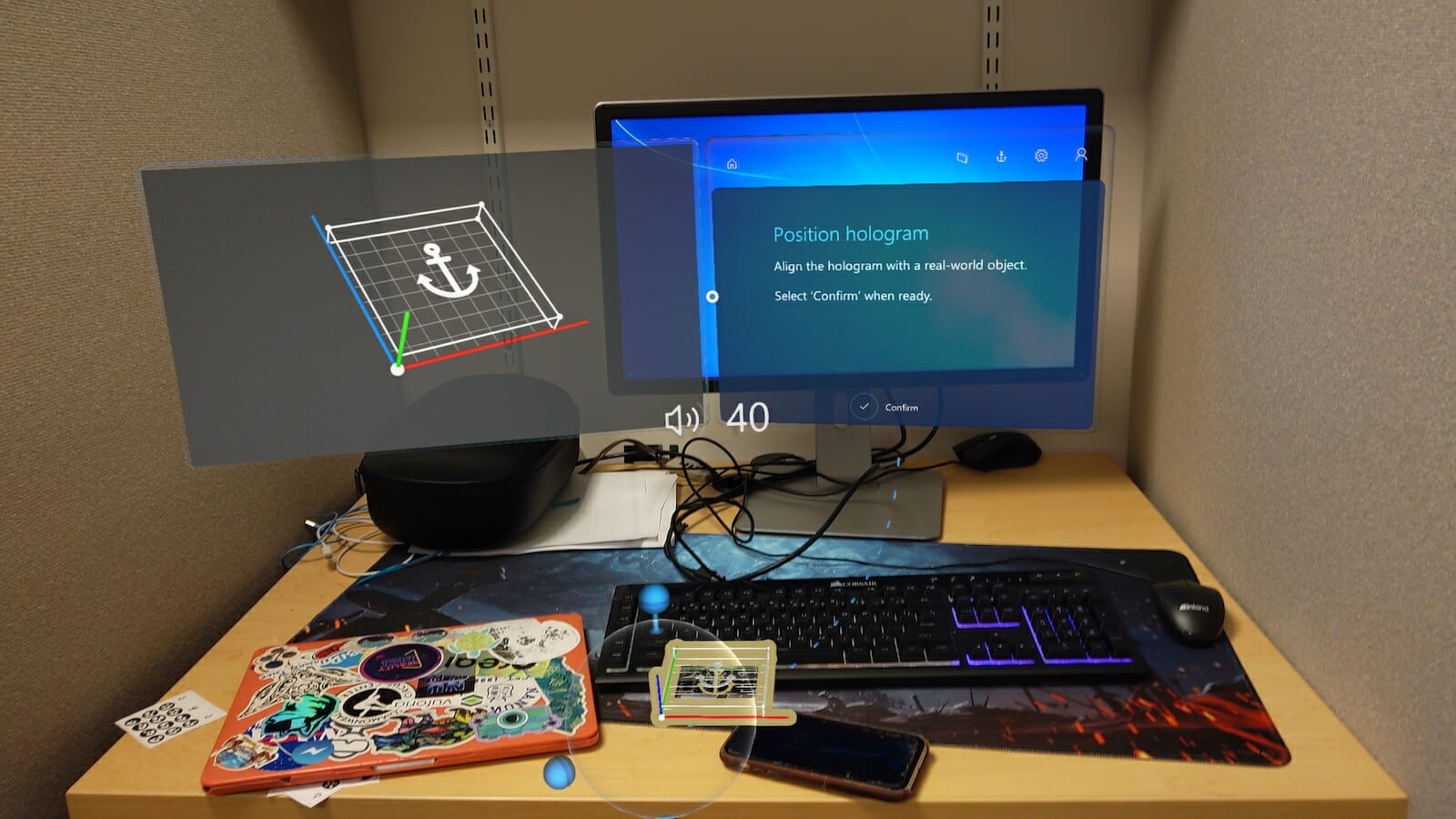

Screenshots from the HoloLens show a student’s perspective while using the device.

NUFlex Expert View

ARTF 1122 Color and Composition requires hands-on demonstrations of techniques that maximize student learning and students’ understanding of the creative process. As the professor performs these demonstrations, they would wear a camera or an AR headset that records from a first-person perspective. Examples of applications and best practices can also be displayed, and a tutorial will be created using Microsoft Guides software. The recorded experience would be made available for students to view through an AR-enabled smartphone or tablet application.

An example technique in this course is cross-hatching to create light and shadow. Wearing the headset, the instructor will show the required pencil positions and angles through their own eyes. They can also overlay information for students and create an interactive presentation that students can navigate on their own time or rewatch when needed. Currently, students watch from their seats in the studio or over the instructor’s shoulder, some while trying to scribble down notes.

NUFlex 360 View

Maker Studio training can involve using a 360° camera, along with an expert view, to record training on equipment in the Maker Studio. Students can then use a VR or AR headset to view the material in an immersive and customized way. This initial prototype could be expanded to include first-person recordings or a Microsoft Guides tutorial that would serve as a precursor for students who need safety training in the Maker Studio.

The College of Arts, Media and Design has a Maker Space that students can access if they are actively taking a course that requires the space and have completed training on the equipment, specifically in the wood shop. Under non-pandemic conditions, it is difficult to schedule all the trainings and make sure that students complete them in time to use the space for their coursework. The training covers tools like a drill press, band saw, sander, brad gun, clamps, jigsaw, and chop saw. An immersive training that students can watch or interact with on their own time, or revisit when they need a refresher, should increase safety and reduce congestion in the space during the early weeks of the semester.

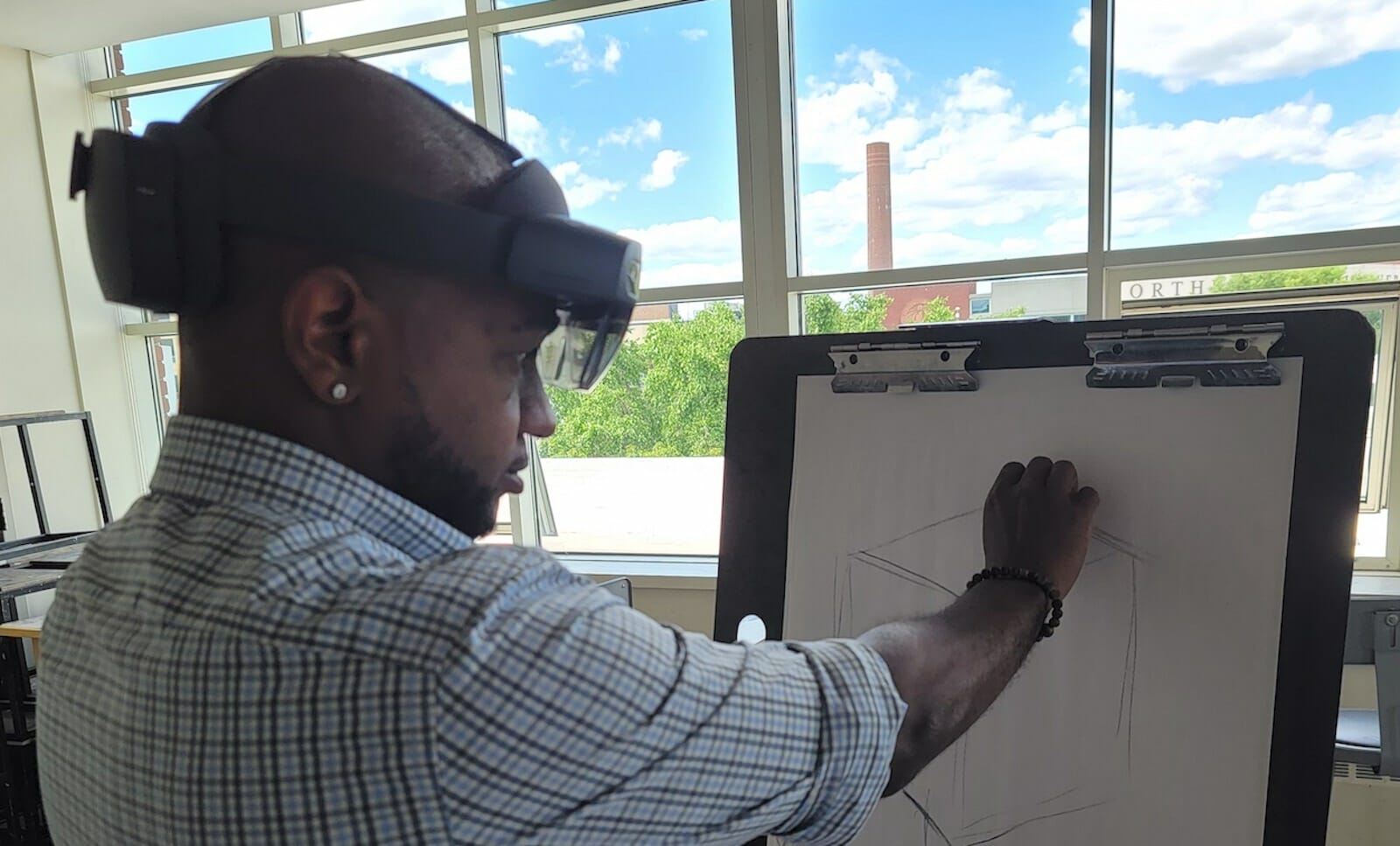

Immersive Critique

Students in ARTF 1120 Observational Drawing can use an AR headset during a critique of their work. Using Remote Assist software, the instructor and classmates can comment and discuss in real time while seeing the piece. In another possible configuration, the instructor wears the headset and the creator of the work uses the software to take part in the critique. Once the critique video has been captured, a social video app can be used to add asynchronous feedback and discussion.

In Observational Drawing, students learn to develop a drawing practice. A key component of this is learning how to critique the work of others and how to receive feedback on their own work. In the current NUFlex environment, this means taking many pictures of the work and sending them to be critiqued by others on a screen. Instruction through a single classroom camera trained on the faculty member at the front of the room is not conducive to learning; a better solution is for students to see from the instructor’s point of view.

Real-time Visual Learning

Multiple courses in Art + Design teach students about perception and how to see objects, be it for drawing, sculpture, photography, or design. Mixed Reality technology will allow students to see changes in the world around them in real time. We will pilot this idea in Observational Drawing by having students wear an MR headset that can render the subject in grayscale, blur it, dynamically change its lighting, or show it as a silhouette. The instructor can use these different ways of seeing to draw comparisons, help students make choices, and more.

Conclusion

This work will begin in Art + Design, but courses in many other disciplines could benefit from these technologies, and their faculty should consider the impact XR can have. XR still has issues: cost and access are two of the largest, and accessibility is another. As we try to improve the classroom experience, we need to make sure we are doing so for all students. Hopefully, COVID is waning in the US, and institutions of higher education will be able to ease the restrictions required to keep everyone on campus safe. Even so, the knowledge and tools of the past year should be harnessed alongside these new technologies to create a more flexible, customized, and pedagogically superior learning environment.